Google Gemini Alternatives for Modern Enterprises

Why Enterprises Are Looking Beyond Google Gemini

Google Gemini is a powerful generative AI model that has earned its place alongside ChatGPT and Claude as one of the top-tier LLMs available today. Its integration with Google Workspace, real-time grounding via search, and strong capabilities in reasoning, summarization, and code generation make it an appealing choice for many organizations.

However, when it comes to scaling generative AI across the enterprise, leaders in IT and business functions are beginning to evaluate alternatives. The reason is simple: while Gemini offers strong performance, the enterprise-grade version—Gemini for Workspace—comes at a premium. Licensing costs can quickly balloon when trying to roll out access across departments. For IT teams managing budgets and compliance obligations, this creates a dilemma: continue investing in a single vendor’s ecosystem, or explore more flexible options that provide similar capabilities with better cost and control?

Enterprises today need more than just performance. They require tools that are secure, customizable, cost-effective, and compliant with evolving regulations. That’s why many are exploring Google Gemini alternatives that include a broader orchestration layer—one that supports multiple LLMs, enforces governance, and helps teams select the right model for the right task.

In the sections ahead, we will examine the top Gemini alternatives for enterprises, analyze how different departments benefit from different models, and introduce a secure orchestration platform that delivers full control over your generative AI strategy—without compromising on capability.

The Expanding Landscape – Top Google Gemini Alternatives in 2026

Google Gemini has emerged as one of the most advanced generative AI models in the market today. Known for its integration with Google Workspace, real-time search grounding, and multilingual capabilities, it continues to deliver value across a range of enterprise use cases—from engineering to content creation.

At the same time, many enterprises are broadening their AI strategy to include other models that align more closely with specific departmental needs, budget considerations, or infrastructure preferences. The shift is not about replacing Gemini, but about complementing it with additional tools that offer flexibility in deployment, control, and performance.

Below is an overview of leading large language models that enterprises are evaluating and adopting alongside or in place of Gemini, depending on the use case:

| AI Platform | Key Strengths | Common Use Cases |
|---|---|---|
| Microsoft Copilot | Seamless Microsoft 365 integration, enterprise-grade governance | Administrative tasks, project collaboration, workflows |
| Claude (Anthropic) | Long-form reasoning, privacy-conscious design | Legal documentation, HR content, policy generation |
| ChatGPT (OpenAI) | Fast, versatile, and highly extensible | Marketing, customer engagement, product support |
| Perplexity AI | Real-time research and live search integration | Knowledge work, analyst teams, customer service |
| Amazon Bedrock | Access to multiple LLMs in one platform, private deployment support | Automation, backend integration, IT infrastructure |
| IBM watsonx | Built for domain-specific AI, strong regulatory alignment | Financial services, healthcare, regulated industries |
| Open-source LLMs | Customizable, deployable on-premise or in private cloud | Internal tooling, data-sensitive workflows |
| Vertical Copilots | Tailored for industry platforms like Salesforce, SAP, and ServiceNow | CRM, ERP, service operations, supply chain |

Each of these models brings unique advantages. Some prioritize structured output or private hosting, while others focus on long-memory interactions or tone adaptability. Gemini remains a top-tier choice, particularly for organizations embedded in the Google ecosystem. However, pairing it with complementary models allows for more tailored, cost-effective, and compliant AI deployment across the enterprise.

Modern AI strategies are becoming model-agnostic. Enterprises are selecting the best tool for each task—whether that includes Gemini, GPT-4, Claude, or a specialized copilot—based on team needs, governance requirements, and long-term scalability.

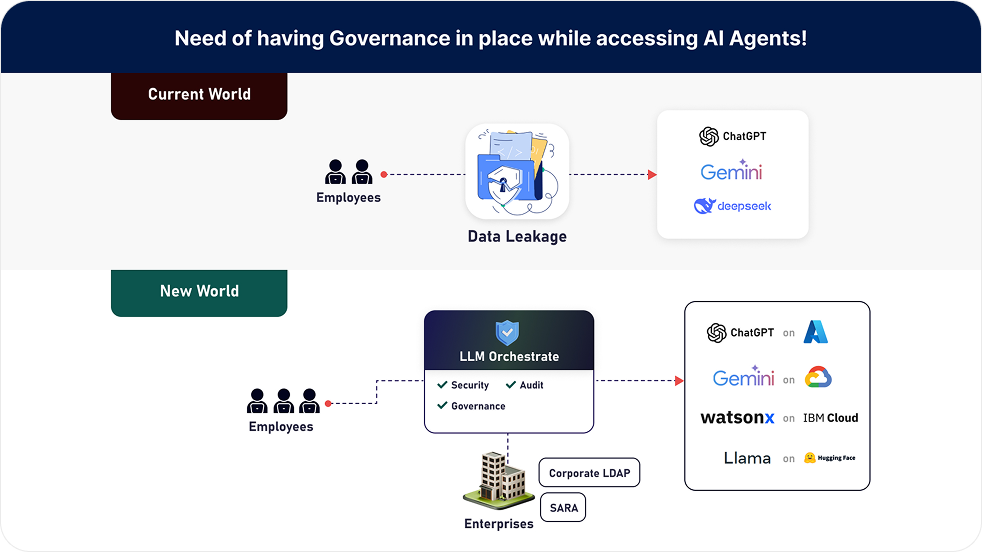

Fragmented AI = Hidden Risk

When teams adopt Gen AI in silos, three major problems emerge:

- No Central Governance: Who’s monitoring how AI is used across the business? Without oversight, legal and compliance teams cannot track whether data policies are being followed. Sensitive prompts might be sent through unsecured tools, and no one would know.

- Shadow AI and Data Leakage: Employees may copy business data into free, consumer-facing tools. Even if well-intentioned, this exposes confidential information to environments with unknown retention policies or no encryption.

- Duplicate Costs, Zero Visibility: Each department might pay separately for tools that do the same thing. Without a shared dashboard, IT and finance teams cannot track token usage, cost per request, or which models are actually delivering value.

This scattered landscape leads to growing concerns from CISOs, procurement heads, and compliance leaders alike. What started as innovation turns into complexity, cost, and risk.

So, the big question becomes:

What if you didn’t have to stop employees from using the tools they like, but still had complete control over how those tools are accessed, governed, and paid for?

That’s exactly what an LLM Orchestrator enables.

Instead of forcing teams into a one-model-fits-all approach, the orchestrator acts as a single control layer across all the models your teams use—whether it’s ChatGPT, Gemini, Claude, Amazon Bedrock, or open-source LLMs. It lets you plug these tools into a secure, audit-ready environment without blocking creativity or introducing overhead.

You don’t have to limit AI innovation. You just need a smarter way to manage it.

Introducing a Secure, Audit-Ready LLM Orchestrator

What if you could give every team in your organization access to the generative AI models they prefer—without compromising on governance, compliance, or cost control?

That’s exactly what an LLM Orchestrator makes possible.

Rather than being “just another chatbot interface,” the orchestrator is a centralized control platform that sits above all your generative AI tools. It does not replace ChatGPT, Gemini, Claude, or any other model—it connects them. It becomes the single pane of glass through which your enterprise accesses and manages every AI model, securely and intelligently.

Think of it as your enterprise’s AI command center.

Whether your marketing team wants to use ChatGPT, your legal team prefers Claude, or your developers integrate Gemini for coding tasks, the orchestrator enables it all from one unified platform—governed by IT, visible to compliance, and auditable by design.

What Can You Do with an LLM Orchestrator?

- Assign the Right Model to the Right Job: Use Claude for contract analysis, GPT-4 for product copy, Gemini for real-time research, and watsonx for financial reporting, all routed based on use case and sensitivity.

- Apply Enterprise-Wide Controls: Set role-based access policies, implement usage limits by team or user, and ensure every prompt is logged and monitored.

- Maintain Full Audit Readiness: Every interaction is recorded in immutable logs. Prompt history, response data, and access trails are available on demand for audit or security review.

- Route Sensitive Workloads to Secure Models: You can choose to run certain prompts through models hosted in your own environment, such as Google Gemini, Amazon Bedrock, or IBM watsonx, while less sensitive tasks can go through vendor-hosted LLMs.
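The routing idea in the list above can be sketched in a few lines of Python. This is a minimal illustration, not the orchestrator's actual implementation: the `ROUTES` table, the `Request` type, and the model labels (including `private-hosted-llm`) are hypothetical names chosen to mirror the examples in the text.

```python
from dataclasses import dataclass

# Hypothetical routing table: use case -> model label.
# Labels echo the examples in the text; they are not real API identifiers.
ROUTES = {
    "contract_analysis": "claude",
    "product_copy": "gpt-4",
    "real_time_research": "gemini",
    "financial_reporting": "watsonx",
}
DEFAULT_MODEL = "gpt-4"               # assumed fallback for unmapped use cases
PRIVATE_MODEL = "private-hosted-llm"  # assumed model running in your own environment


@dataclass(frozen=True)
class Request:
    use_case: str
    sensitivity: str  # "low" or "high"


def route(request: Request) -> str:
    """Send sensitive prompts to the private model, everything else by use case."""
    if request.sensitivity == "high":
        return PRIVATE_MODEL
    return ROUTES.get(request.use_case, DEFAULT_MODEL)


print(route(Request("contract_analysis", "low")))   # claude
print(route(Request("contract_analysis", "high")))  # private-hosted-llm
```

Checking sensitivity before use case is a deliberate choice in this sketch: a high-sensitivity prompt never reaches a vendor-hosted model, whatever the task.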

Botonomics: Smarter Cost Control for Scalable Google Gemini Alternatives

Google Gemini is a powerful enterprise-grade AI model—but scaling it across your entire organization can get expensive fast. With fixed seat-based licensing, limited integration flexibility, and minimal visibility into usage, costs can quickly spiral beyond control. That’s where Botonomics comes in. At the core is an LLM Orchestrator that connects your teams to Google Gemini and other leading models like ChatGPT (via Azure), Claude, Amazon Bedrock, IBM watsonx, and even open-source LLMs—all through a single, governed platform.

- One Platform, Multiple Models: Teams can use the best model for their task (Gemini for real-time research, Claude for policy writing, or watsonx for financial workflows) without juggling tools or credentials.

- Token-Based, Usage-Aligned Pricing: You only pay for what’s used. Lighter requests can be routed to more affordable models, while high-priority tasks access premium options like Gemini.

- Real-Time Analytics and Spend Visibility: Monitor model usage across departments. Track cost, adoption trends, and performance from a unified dashboard.

- Risk-Based Redaction and Policy Enforcement: Sensitive content is automatically masked or filtered based on user roles and context.

- Unified Audit Logging: Maintain compliance with immutable records of every AI interaction, across all LLMs.

- Shadow AI Control: Bring unsanctioned tools into a centralized, auditable environment, without disrupting workflows.
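To make role-based redaction more concrete, here is a minimal Python sketch that masks sensitive patterns before a prompt leaves the organization. The patterns, the role names, and the `ALLOWED` policy table are illustrative assumptions; a production system would need much broader detection than two regular expressions.

```python
import re

# Illustrative patterns only; real deployments need far broader coverage.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical policy: categories each role may send unmasked.
ALLOWED = {
    "compliance_officer": {"email", "ssn"},
    "analyst": {"email"},
}


def redact(prompt: str, role: str) -> str:
    """Mask every sensitive category the given role is not cleared to send."""
    allowed = ALLOWED.get(role, set())  # unknown roles are cleared for nothing
    for name, pattern in PATTERNS.items():
        if name not in allowed:
            prompt = pattern.sub(f"[{name.upper()} REDACTED]", prompt)
    return prompt


text = "Contact jane@example.com, SSN 123-45-6789"
print(redact(text, "analyst"))
print(redact(text, "intern"))
```

Roles missing from the policy table get the strictest treatment, so a misconfigured or new role fails closed rather than open.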

The outcome: you retain full access to Google Gemini where it matters most, while dramatically improving flexibility, compliance, and cost control across your AI stack.

That’s the promise of orchestrated AI.

The LLM Orchestrator enables:

- Unified Access to All Leading LLMs: Gain secure, policy-controlled access to GPT (via Azure), Claude, Gemini, Amazon Bedrock, watsonx, and open-source models, all through a single interface. No silos, no redundant integrations, just unified, governed AI access.

- Token-Based, Usage-Aligned Pricing: Move beyond seat licenses. With token-based pricing, you pay only for what’s used. Route basic tasks to lightweight models and reserve premium models for high-value scenarios. This keeps costs tied to actual usage without sacrificing capability.

- Smart Model Routing by Use Case: Assign the right model for each workflow based on reasoning depth, privacy requirements, or business function. Legal teams, support agents, and analysts all get the best-fit AI, without compromise.

- Real-Time Cost and Usage Visibility: Monitor consumption by team, model, or task. Analyze usage trends, track spend per token, and generate reports for finance, compliance, and security reviews.
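As a rough illustration of token-based pricing and per-team spend tracking, the sketch below totals cost from usage records. The model names and per-1,000-token prices are placeholders invented for the example, not real vendor rates, and `spend_by_team` is a hypothetical helper.

```python
from decimal import Decimal

# Placeholder prices per 1,000 tokens in USD; NOT real vendor rates.
PRICE_PER_1K = {
    "lightweight-model": Decimal("0.50"),
    "premium-model": Decimal("10.00"),
}


def request_cost(model: str, tokens: int) -> Decimal:
    """Cost of one request: price per 1,000 tokens times tokens used."""
    return PRICE_PER_1K[model] * tokens / 1000


def spend_by_team(usage):
    """Sum cost per team from (team, model, tokens) records."""
    totals = {}
    for team, model, tokens in usage:
        totals[team] = totals.get(team, Decimal("0")) + request_cost(model, tokens)
    return totals


usage = [
    ("marketing", "lightweight-model", 2000),
    ("legal", "premium-model", 500),
    ("marketing", "premium-model", 1000),
]
for team, total in spend_by_team(usage).items():
    print(team, total)
```

`Decimal` is used instead of floats so that small per-request amounts add up without rounding drift in finance reports.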

Whether you are aligning with GDPR, HIPAA, SOX, or NIST guidance, the orchestrator keeps your AI usage secure and auditable without slowing teams down.
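One common way to make an audit log tamper-evident is to chain entries by hash, so that changing any past record invalidates everything after it. The sketch below shows that general technique in Python; it is an assumption about how such logging could work, not a description of how the orchestrator stores its logs, and the function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: dict, prev_hash: str) -> str:
    """SHA-256 over the canonical JSON of the record plus the previous hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record, linking it to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": _digest(record, prev_hash)})


def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"user": "alice", "model": "claude", "prompt": "Summarize contract"})
append_entry(log, {"user": "bob", "model": "gemini", "prompt": "Research competitors"})
print(verify(log))
log[0]["record"]["prompt"] = "tampered"
print(verify(log))
```

A hash chain detects tampering but does not prevent it; in practice it would be paired with write-once storage or an external anchor for the latest hash.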

Deploy it in the cloud, on-prem, or hybrid—it adapts to your infrastructure. IT stays in control, legal gets visibility, and business users access GenAI confidently and compliantly.

No blind spots. No shadow AI. Just safe, scalable innovation.

One Dashboard for GenAI Oversight Across the Enterprise

As more teams start using generative AI, it gets harder to see the full picture. Who’s using which model? How much is it costing? Are the right policies being followed?

An LLM Orchestrator brings everything together in one place. It gives you a single dashboard to monitor usage across departments, track consumption, set limits, and flag unusual activity. You don’t have to chase down data or switch between tools—everything is visible and manageable from one central view.
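The "set limits and flag unusual activity" part of that dashboard could be backed by logic along these lines. This is a simplified Python sketch: the `UsageMonitor` class, the per-team token limits, and the spike threshold of three times the running average are all assumptions made for illustration.

```python
from collections import defaultdict


class UsageMonitor:
    """Tracks token usage per team and flags limit breaches and spikes."""

    def __init__(self, team_limits, spike_factor=3.0):
        self.team_limits = team_limits    # team -> max tokens per period
        self.spike_factor = spike_factor  # multiple of average that counts as unusual
        self.used = defaultdict(int)
        self.history = defaultdict(list)

    def record(self, team, tokens):
        """Record one request and return any flags it raises."""
        flags = []
        past = self.history[team]
        if past:
            average = sum(past) / len(past)
            if tokens > self.spike_factor * average:
                flags.append("unusual_spike")
        limit = self.team_limits.get(team)
        if limit is not None and self.used[team] + tokens > limit:
            flags.append("limit_exceeded")
        past.append(tokens)
        self.used[team] += tokens
        return flags


monitor = UsageMonitor({"marketing": 10_000})
print(monitor.record("marketing", 1_000))
print(monitor.record("marketing", 1_200))
print(monitor.record("marketing", 9_000))
```

The sketch only reports flags; whether a flagged request is blocked, throttled, or merely surfaced on the dashboard is a policy decision left to the caller.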

It’s not just about keeping things organized. It’s about gaining the confidence to scale AI across your business without losing control.

Built to Plug Into the Tools You Already Use

Your teams don’t need more tools—they need smarter ones in the tools they already use. That’s where the orchestrator fits in.

It connects Google Gemini and other top models directly into your existing platforms—Slack, Microsoft Teams, Salesforce, ServiceNow, or internal portals—so AI becomes part of your daily workflow, not another tab to manage.

It also works with your identity systems like SAML and LDAP, making access secure and role-based from day one.

You choose the deployment—cloud, on-prem, or hybrid. The orchestrator doesn’t replace your tech stack. It upgrades it. Seamless, secure, and exactly where your teams already are.

Take the Next Step

If you’re exploring how to scale Google Gemini—or any Gen AI model—across your organization without overspending or losing control, now’s the time to act smarter.

An LLM Orchestrator gives you the best of both worlds: access to world-class models like Gemini, GPT-4, Claude, and more, combined with centralized control, audit readiness, and cost optimization.

No more silos. No more tool sprawl. Just one intelligent platform built for scale, security, and flexibility.

Ready to see it in action?

Try the Orchestrator Demo – Experience multi-model orchestration firsthand.

Get Your Generative AI Governance Plan – Custom-tailored to your compliance, industry, and IT goals.

Talk to an AI Compliance Specialist – Get answers on deployment, security, and scaling AI responsibly.