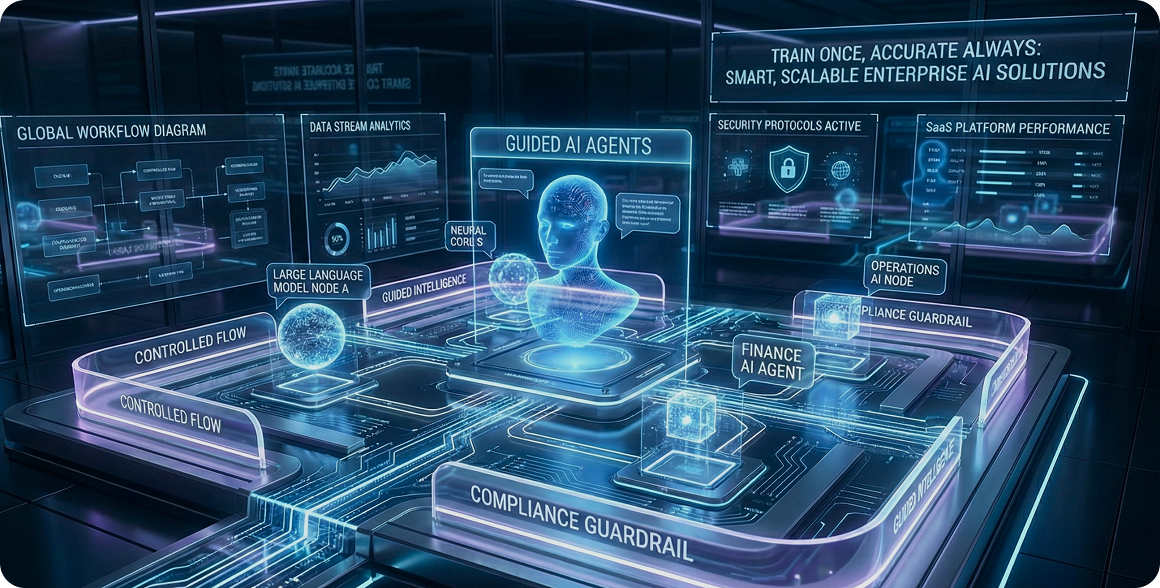

Guided AI Agents

Train Once, Accurate Always — A Faster Path to Enterprise AI

Introduction

Why Enterprise AI Agent Projects Take Too Long

Enterprise AI has reached an inflection point. Most CIOs and technology leaders have moved past debating whether to adopt AI and are now focused on execution: building AI agents that can reduce cost, improve operational throughput, and deliver measurable outcomes across service, operations, risk, and IT.

And yet, many AI agent initiatives still take far longer than expected.

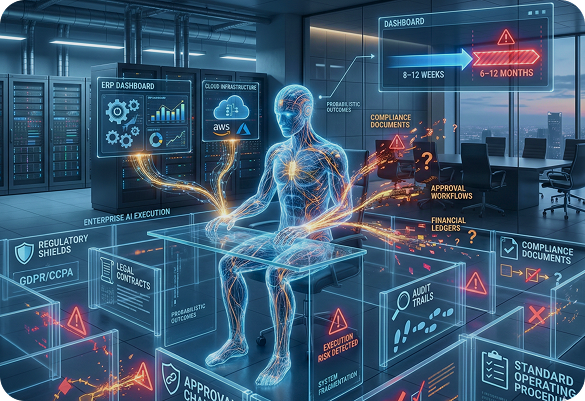

A concept that seems straightforward in a pilot—“build an agent that can handle requests end-to-end”—often turns into a complex, slow-moving implementation program. Projects that were expected to go live in 8–12 weeks stretch into 6–12 months. The scope expands. The maintenance effort grows. Teams discover that the agent is “working” in demos but unreliable in production.

This is not because enterprises lack talent, investment, or intent. It happens because AI agents introduce a hidden complexity that is easy to underestimate until the organization attempts to operationalize them.

The hidden complexity behind “intelligent” AI agents

Traditional AI agent development—especially when built programmatically and integrated with Large Language Models (LLMs)—creates an illusion of speed early on. Teams can assemble a prototype quickly using prompts, orchestration frameworks, connectors, and a basic workflow. Within days, the agent might generate reasonable answers, complete a few tasks, and even appear to “understand” internal requests.

But enterprise-grade success requires far more than generating a correct response.

Real-world enterprise AI agents must handle:

Ambiguous user intent and incomplete requests

Strict role-based access control and authentication

Policy constraints (legal, HR, procurement, finance)

Exceptions, escalations, and approvals

Changing systems, processes, and organizational rules

Repeatable performance under real usage volume

The more an AI agent is expected to execute work—rather than answer questions—the more it must operate reliably within enterprise constraints.

Why most enterprises underestimate training, supervision, and maintenance

One reason projects run long is that many enterprises treat AI agents like conventional software automation. The assumption is: build it once, monitor it lightly, and improve it occasionally.

In practice, most “traditional AI agents” require constant correction and continuous supervision. They depend on ongoing prompt tuning, retraining cycles, and human-in-the-loop review because the agent is operating inside probabilistic systems.

And probabilistic systems do not behave like deterministic enterprise software.

This gap becomes obvious after launch. Even if accuracy starts strong, it can degrade as soon as policies change, knowledge evolves, workflows shift, or new edge cases appear. Teams begin adding oversight layers, escalating exceptions, and revisiting training data weekly or monthly. Over time, training and supervision become a permanent operational workload.

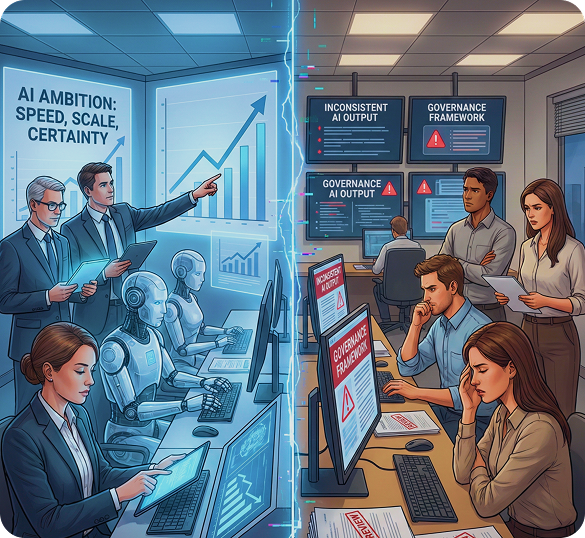

The growing gap between AI ambition and operational reality

Many leadership teams have set ambitious AI roadmaps—automation at scale, workflow acceleration, reduced support costs, faster decision cycles. But that ambition often collides with delivery reality:

Agents don’t behave consistently across departments

Legal and compliance teams hesitate to sign off

IT cannot certify agent outcomes under governance standards

Business teams lose trust when answers vary from one day to the next

McKinsey has noted that while AI adoption is accelerating, capturing value depends heavily on execution discipline, operating models, and how effectively AI is embedded into workflows rather than treated as a standalone tool (McKinsey).

This is where a more practical enterprise approach becomes essential.

The Real Cost of Training AI Agents

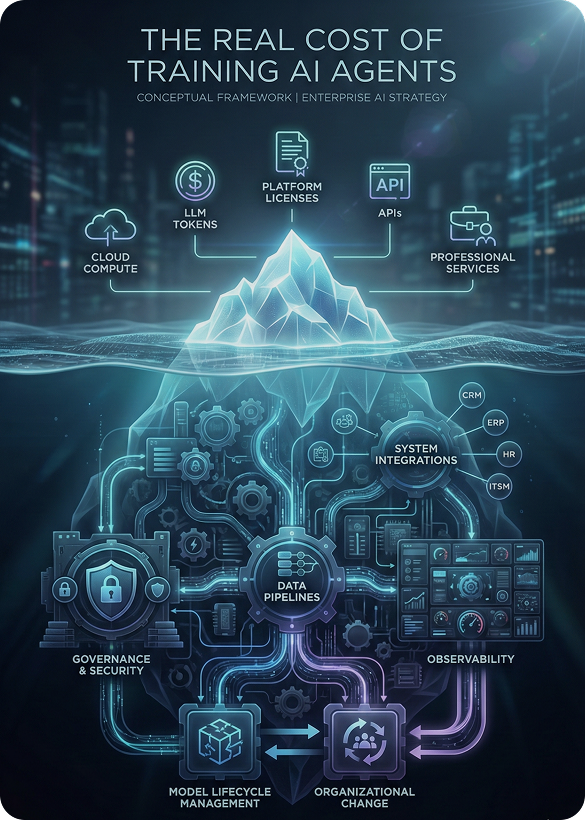

When organizations assess the cost of AI agent initiatives, they often focus on the visible budget line items: cloud usage, platform licensing, and professional services. But the true cost is broader—and the most expensive part is frequently invisible.

Hardware, software, and services: the visible costs

The obvious costs include:

LLM usage and token consumption (especially at scale)

Cloud infrastructure for hosting orchestration, vector databases, observability, and security layers

AI platforms such as Azure OpenAI, Amazon Bedrock, IBM watsonx, or Google Gemini integrations

Integration development for enterprise systems (CRM, ERP, HRIS, ITSM, ECM)

External services for architecture, model customization, and implementation

IDC and Gartner both point out that enterprise AI costs rarely stay confined to model usage alone. Real-world deployments require investment in data pipelines, governance, integration, and lifecycle management (IDC, Gartner).

The invisible cost: people, time, and organizational drag

More than infrastructure, the hidden cost is the human effort required to make the agent dependable.

Common ongoing demands include:

Data preparation and knowledge refinement

Continuous evaluation and testing

Supervision for edge cases and exceptions

SME (subject matter expert) time to validate outputs

Security and compliance review cycles

Change management coordination across departments

This “invisible work” compounds because AI agents interact with systems and processes that are constantly evolving.

PwC has emphasized that many organizations underestimate the operational effort required to sustain AI performance and governance over time, especially when AI is embedded into mission-critical processes (PwC).

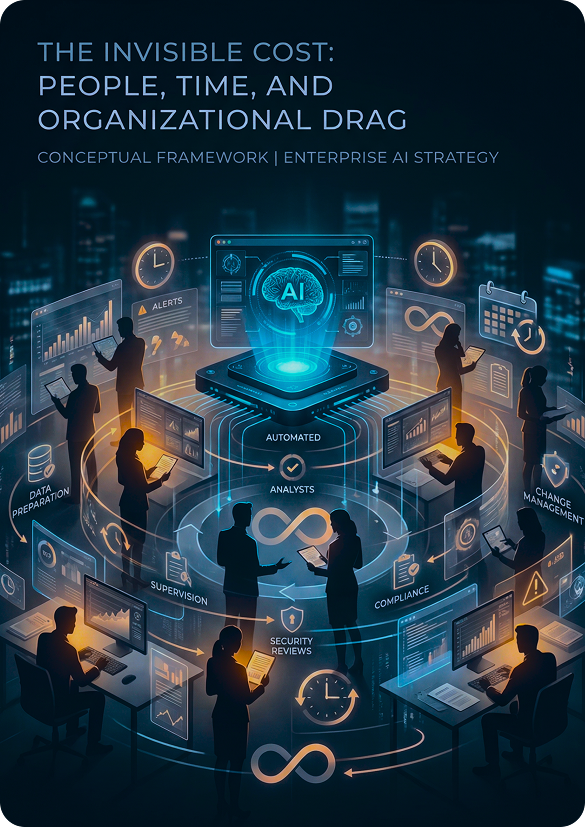

Why supervised training becomes a long-running program, not a project

Enterprises quickly discover that traditional AI agents behave like systems that require “constant attention.” Even if a supervised training approach is applied initially, new business rules and operational conditions keep triggering retraining requirements.

Instead of being delivered like a project with a go-live date, AI agents often become a long-running program:

Train → Deploy → Monitor → Correct → Retrain → Repeat

This cycle becomes expensive not only financially, but also in terms of organizational bandwidth. It draws in IT, risk, compliance, operations, SMEs, and support teams on a recurring basis.

When that happens, scaling beyond a few use cases becomes difficult.

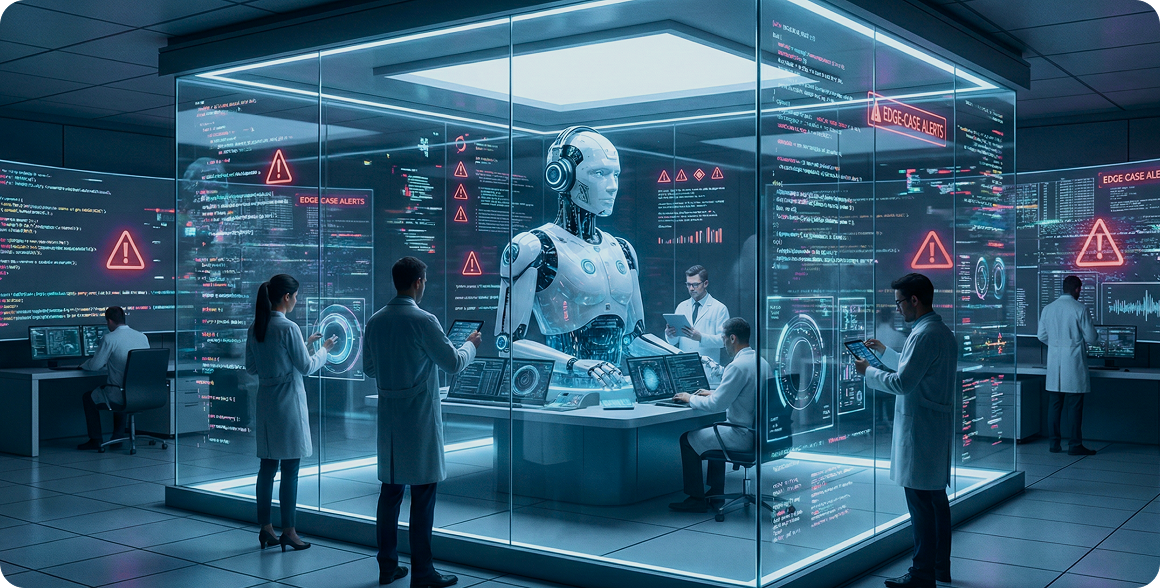

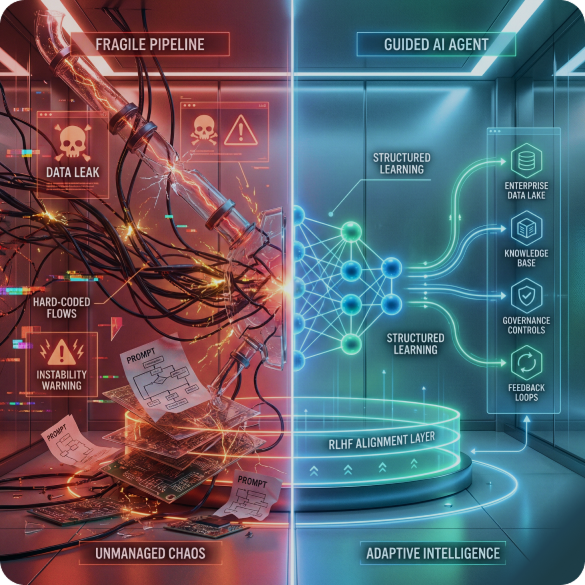

Why Traditional AI Agents Depend on Continuous Supervision

Most AI agents today are built in one of two ways:

Programmatically orchestrated logic + LLM responses

LLM-based agents with tool calling and dynamic exploration

Both rely heavily on probabilistic behavior from LLMs. This is where ongoing supervision becomes unavoidable in many deployments.

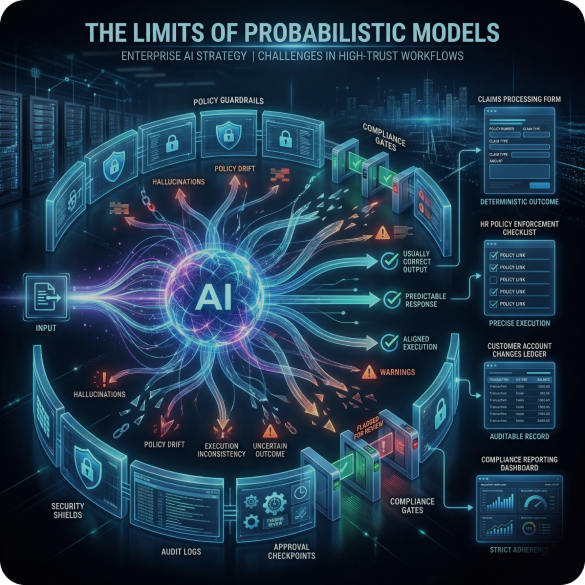

The limits of probabilistic models in enterprise environments

LLMs are not deterministic. They generate outputs based on probability distributions influenced by prompt context and learned patterns. That makes them excellent for language tasks, summarization, classification, and drafting.

But it creates challenges for enterprise execution:

Output variability across similar inputs

Hallucinations when context is incomplete

Inconsistent adherence to policy constraints

Difficulty guaranteeing step-by-step execution accuracy

In high-trust workflows—claims processing, HR policy enforcement, customer account changes, compliance reporting—enterprises cannot accept “usually correct.” They need predictability.
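To make the variability concrete, here is a toy sketch in Python. It is not how any particular LLM is implemented. It only contrasts sampling from a probability distribution with always taking the top-scoring option. The action names and scores are hypothetical, chosen purely for illustration.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Convert raw scores into a probability distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_action(actions, logits, rng, temperature=1.0):
    """Probabilistic choice: the same input can yield different outputs."""
    probs = softmax(logits, temperature)
    return rng.choices(actions, weights=probs, k=1)[0]


def greedy_action(actions, logits):
    """Deterministic choice: always the highest-scoring option."""
    best = max(range(len(actions)), key=lambda i: logits[i])
    return actions[best]


# Hypothetical next-step candidates and scores for one identical request.
ACTIONS = ["approve", "escalate", "reject"]
LOGITS = [2.0, 1.5, 0.5]

rng = random.Random(0)
sampled = {sample_action(ACTIONS, LOGITS, rng) for _ in range(200)}
greedy = {greedy_action(ACTIONS, LOGITS) for _ in range(200)}
print("sampled outcomes:", sorted(sampled))
print("greedy outcomes:", sorted(greedy))
```

Repeating the same request under sampling produces more than one distinct outcome, while the greedy path always returns the same one. That difference is what "usually correct" versus "predictable" means in practice.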

Gartner has repeatedly highlighted that trust, risk, and security management are central barriers to scaling AI, especially in regulated industries (Gartner).

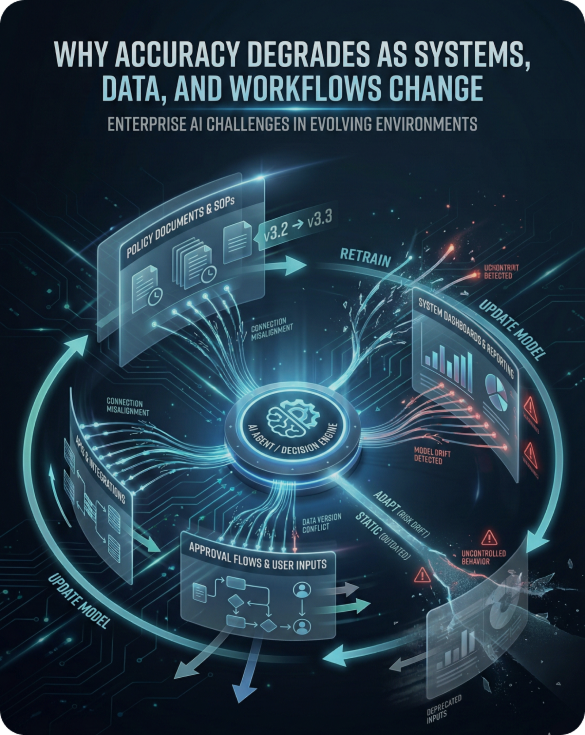

Why accuracy degrades as systems, data, and workflows change

Even if a traditional AI agent performs well initially, accuracy can degrade over time due to:

Knowledge changes: updated SOPs, new product rules, revised policies

System changes: UI updates, API changes, new integrations

Process changes: approval steps, thresholds, compliance conditions

User behavior changes: new phrasing, different expectations, new intents

The agent is forced to operate in a moving environment. If it is “learning” continuously, it can drift. If it is not updated, it becomes outdated.

This is why retraining loops become inevitable.

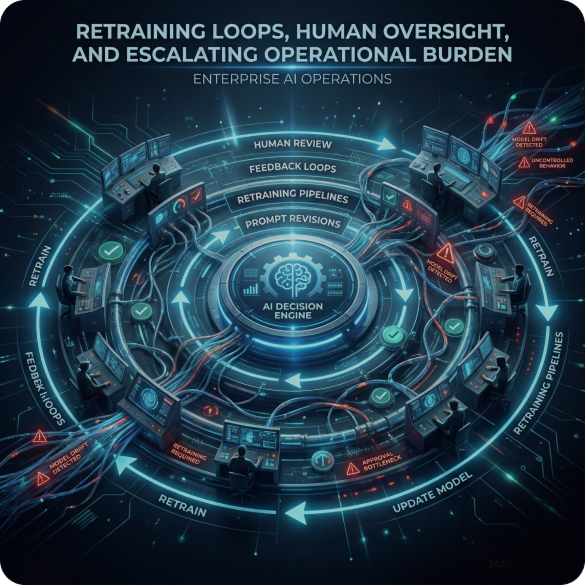

Retraining loops, human oversight, and escalating operational burden

To keep traditional AI agents accurate, teams add:

Human validation layers

Feedback collection systems

Retraining pipelines

Prompt revision cycles

Safety rules and exception handling logic

Over time, enterprises build an entire operational structure around managing AI behavior.

Deloitte research has frequently highlighted that enterprise AI success depends not only on models but on strong operating frameworks that manage change, risk, and accountability (Deloitte).

However, when the operational overhead grows faster than the benefits, stakeholders start questioning ROI.

The Enterprise Problem: When “Learning” Becomes a Liability

Continuous learning sounds attractive in theory. Many AI narratives imply that systems will “get smarter over time” and require less intervention.

In enterprise settings, the opposite often happens.

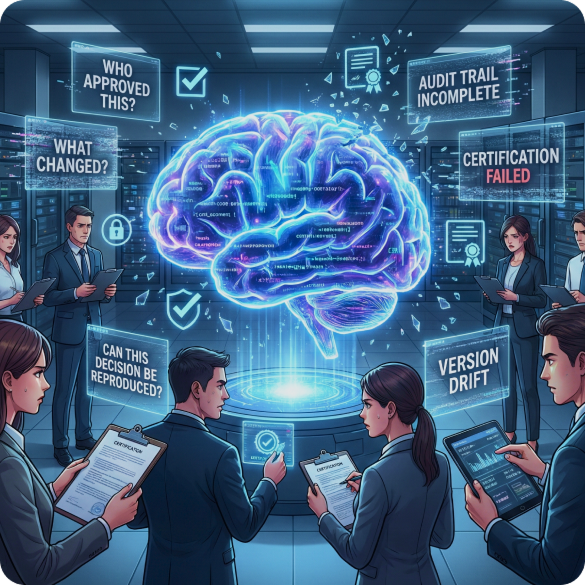

Why constantly learning agents are hard to govern

When AI agents continuously adapt, enterprises struggle to answer basic governance questions:

Who approved this behavior?

What changed between last month and today?

Why did the agent make this decision now but not before?

Can we reproduce outcomes for audit review?

In a regulated environment, AI systems must be explainable, certifiable, and accountable.

Auditability, traceability, and compliance challenges

Audit requirements typically demand:

Traceable reasoning or decision records

Evidence of policy adherence

Role-based access enforcement

Repeatable outcomes for investigations

Clear escalation paths for exceptions

Adaptive agents can create “behavior drift” that undermines audit confidence. Even if drift improves performance in some cases, it increases uncertainty in others.

Forrester has consistently discussed that enterprise AI governance requires traceability, lifecycle control, and strong monitoring—not just model quality (Forrester).

Why IT teams struggle to certify and operationalize adaptive agents

IT leaders are often asked to certify AI agents like they would certify other systems:

Security assurance

Reliability and uptime

Controlled change management

Versioning and release processes

Integration stability

But adaptive behavior makes certification difficult. When behavior changes dynamically, operational teams cannot easily define “expected output.”

This creates a blocker: business teams want autonomy, while IT needs predictability.

So the question becomes:

Is there a way to build AI agents that behave with enterprise-grade reliability—without endless training cycles?

A Different Approach: From Training AI to Guiding AI

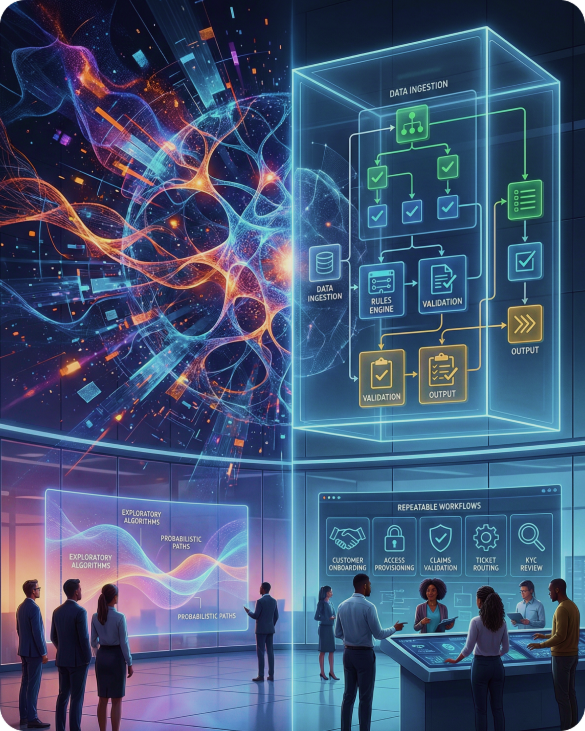

Most enterprises do not actually need agents that “learn continuously.”

They need agents that execute reliably.

This requires a shift away from unbounded, probabilistic exploration and toward structured execution guided by enterprise-defined guardrails.

Why enterprises don’t actually need “supervised-learning” agents

Many operational workflows are known and repeatable:

Customer onboarding

Employee access provisioning

Claims intake validation

Ticket triage and routing

Policy-driven exception handling

KYC documentation review

FAQ + action completion workflows

These processes do not require creativity. They require accuracy and consistency.

In other words, enterprises should not optimize for an agent that “discovers” how to do work. They should optimize for an agent that executes work within defined rules.

Training models on enterprise data to build predictable outcomes

Predictability improves dramatically when AI agents are grounded in enterprise-specific reality:

Existing conversations and historical resolutions

Standard operating procedures (SOPs)

Knowledge articles and policy documents

System behavior patterns and process flows

Approved templates and decision rules

Using enterprise data to train or align custom models produces more controlled and accurate behavior than relying solely on generic LLM responses.

The self-driving car analogy: fast, but knows when to stop

A useful mental model is a self-driving car.

It moves fast, but it does not “freely explore” the road. It operates within:

Clear lanes and boundaries

Traffic rules

Stopping conditions

Safe turning rules

Exception handling mechanisms

Enterprise AI agents should be designed the same way.

They should move quickly, but stay on rails.

This is the core idea behind Guided AI Agents.

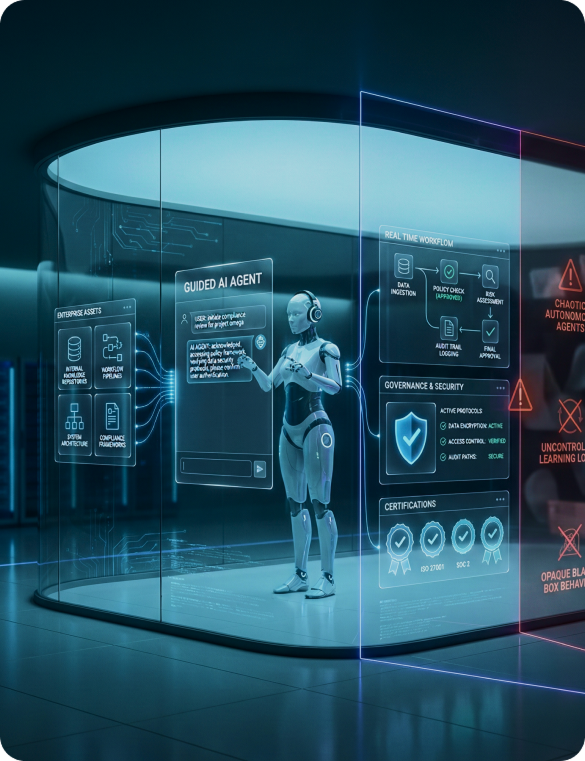

Introducing Guided AI Agents

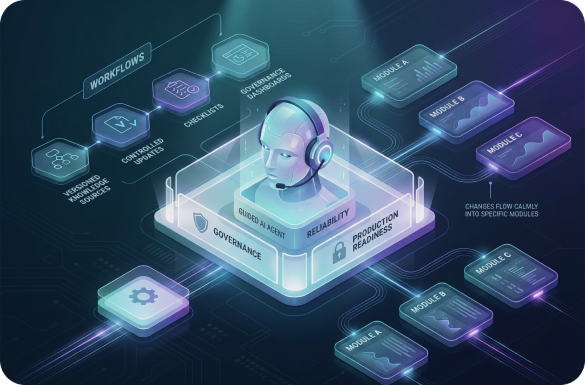

Guided AI Agents are an enterprise approach to agentic AI that prioritizes structure, guardrails, repeatable execution, and operational reliability.

They are designed to be configured once, aligned to enterprise processes, and deployed with stable performance over time.

What Guided AI Agents are (and what they are not)

Guided AI Agents are:

AI agents trained and aligned on enterprise knowledge, workflows, and system behavior

Designed to follow defined steps, boundaries, and policies

Built for execution in real enterprise environments

Governable, auditable, and operationally certifiable

Guided AI Agents are not:

Open-ended autonomous agents that “learn freely” in production

Systems that continuously drift based on uncontrolled feedback loops

Black-box agents with unpredictable decision patterns

This model enables a practical, compliance-friendly approach to AI adoption.

Training your AI agent on custom models, documents, SOPs, and systems

Instead of relying only on prompts and dynamic reasoning, Guided AI Agents use enterprise assets as foundational alignment inputs:

Knowledge sources (documents, SOPs, internal policies)

Structured process steps

Approved resolution patterns from existing interactions

System-of-record integration constraints

This creates an agent that behaves like an operational capability—not a lab experiment.

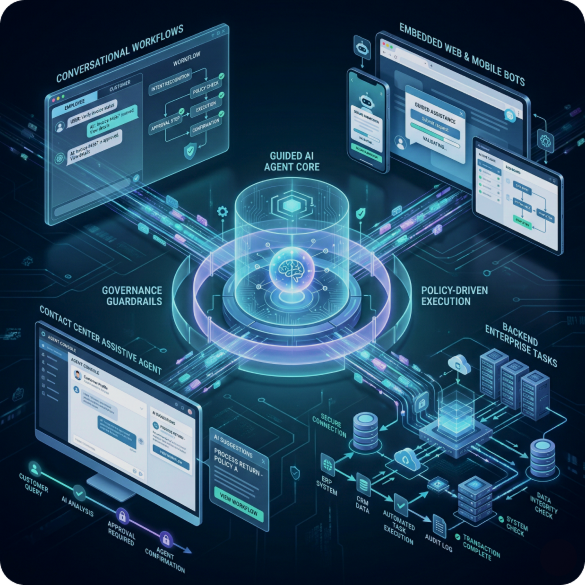

Guided AI chatbot, Guided bots, and guided execution across channels

Guided AI Agents can power multiple interfaces, including:

A Guided AI chatbot for employee and customer workflows

Guided bots embedded in portals and mobile applications

Assistive agents integrated into contact center desktops

Backend agents executing tasks via enterprise systems

The user experience can remain conversational, but the execution stays structured.

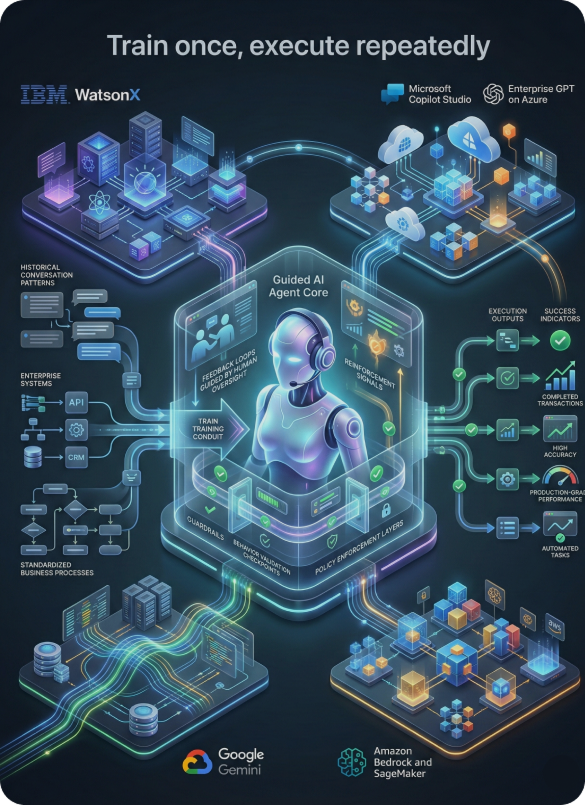

Smart Guided AI Agents built for enterprise platforms

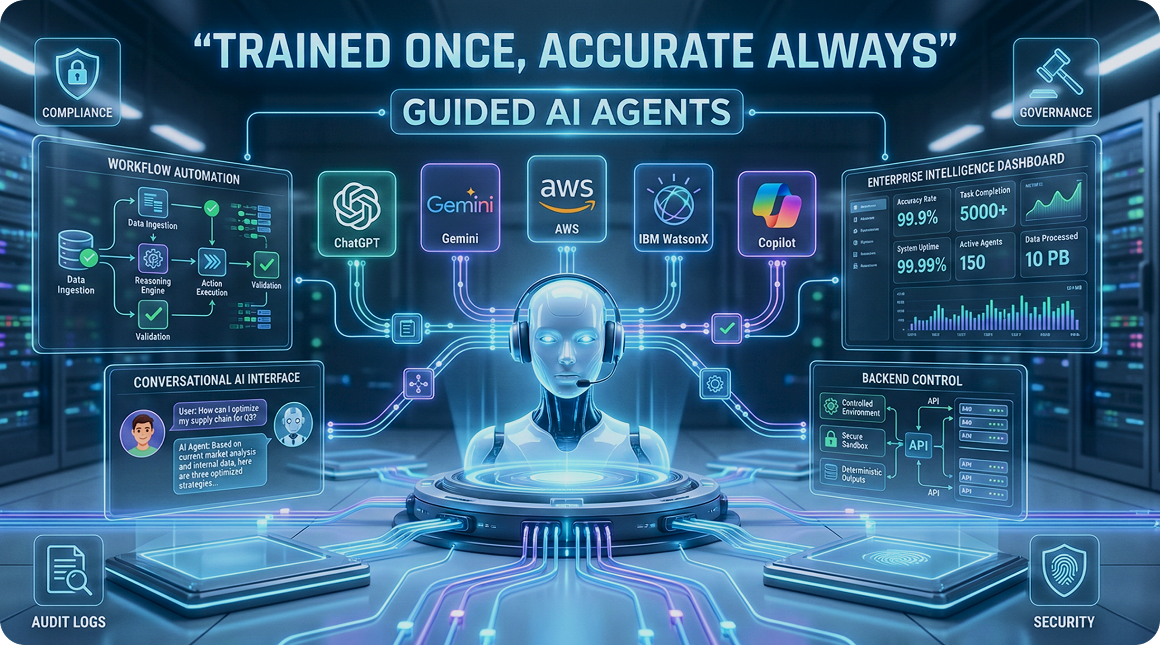

Streebo, an AI Company, provides enterprise-ready, pre-trained Guided AI Agents that are designed to accelerate deployment while maintaining governance and reliability.

We assemble AI Agents built on enterprise-grade platforms including:

These agents are aligned using existing conversations, systems, and processes—so enterprises can train once and achieve repeatable execution outcomes.

Leveraging best practices from IBM, Microsoft, AWS, and Google, we apply advanced techniques such as RLHF (Reinforcement Learning from Human Feedback) to help enterprises define guardrails, validate behavior, and reach production-grade reliability.

Once trained, these Guided AI Agents are designed to operate with 99%+ accuracy across defined workflows—without requiring endless retraining cycles.

How Guided AI Agents Are Trained Once – and Stay Accurate

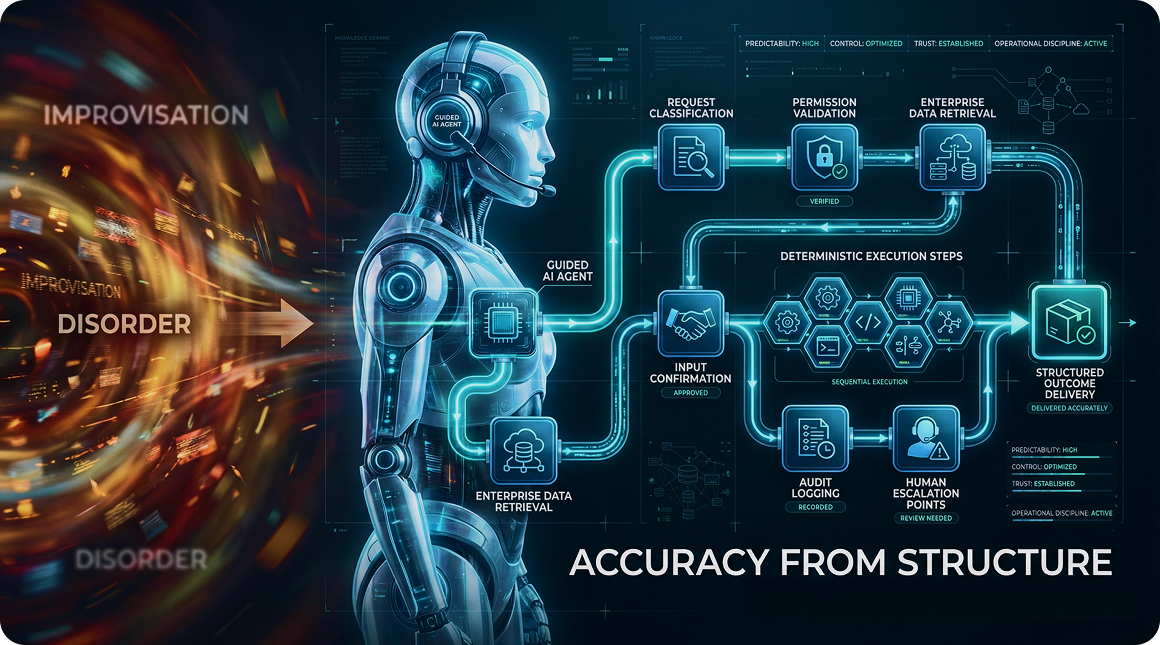

The defining advantage of Guided AI Agents is that accuracy comes from structure, not ongoing correction.

Rather than treating every interaction as a fresh reasoning challenge, guided agents execute consistent paths based on enterprise-defined workflows.

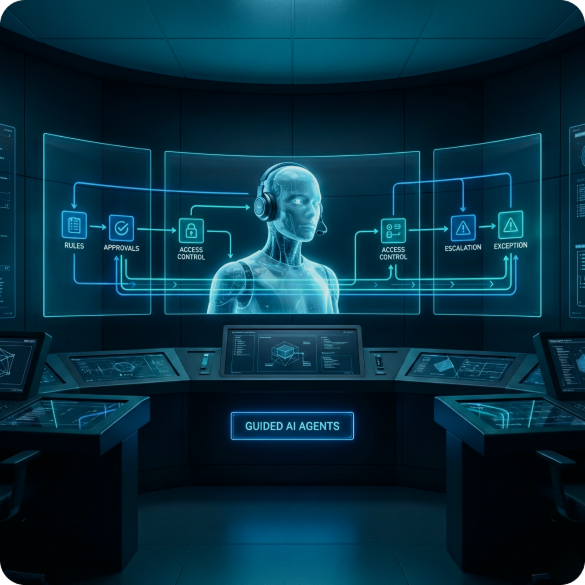

Initial guided alignment: defining steps, rules, and boundaries

The initial setup focuses on alignment—not endless iteration.

Enterprises define:

The workflow steps the agent must follow

The rules that govern execution

The permitted systems and actions

Escalation conditions and approvals

Exception handling boundaries

This creates a stable operating framework.
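One way to picture such a framework is as a declarative definition that the agent consults before acting. The sketch below is a minimal illustration in Python, not a product schema. `WorkflowDefinition`, its field names, and the action identifiers such as `iam.grant` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowDefinition:
    """Hypothetical declarative description of one guided workflow."""
    name: str
    steps: tuple                 # ordered steps the agent must follow
    allowed_actions: frozenset   # systems and actions the agent may use
    approval_steps: frozenset = frozenset()  # steps that need human sign-off


def is_permitted(workflow, action):
    """An action outside the permitted set is never executed."""
    return action in workflow.allowed_actions


def requires_approval(workflow, step):
    """Escalation condition: this step needs a human approval first."""
    return step in workflow.approval_steps


def next_step(workflow, completed):
    """Return the next step in the fixed order, or None when finished."""
    for step in workflow.steps:
        if step not in completed:
            return step
    return None


# Illustrative definition for an access provisioning workflow.
ACCESS_PROVISIONING = WorkflowDefinition(
    name="employee_access_provisioning",
    steps=("verify_identity", "check_entitlement", "grant_access", "notify"),
    allowed_actions=frozenset({"hris.read", "iam.grant", "email.send"}),
    approval_steps=frozenset({"grant_access"}),
)

print(next_step(ACCESS_PROVISIONING, ["verify_identity"]))
print(is_permitted(ACCESS_PROVISIONING, "erp.post_payment"))
```

Because the definition is data rather than prompt text, it can be reviewed, approved, and versioned like any other enterprise configuration.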

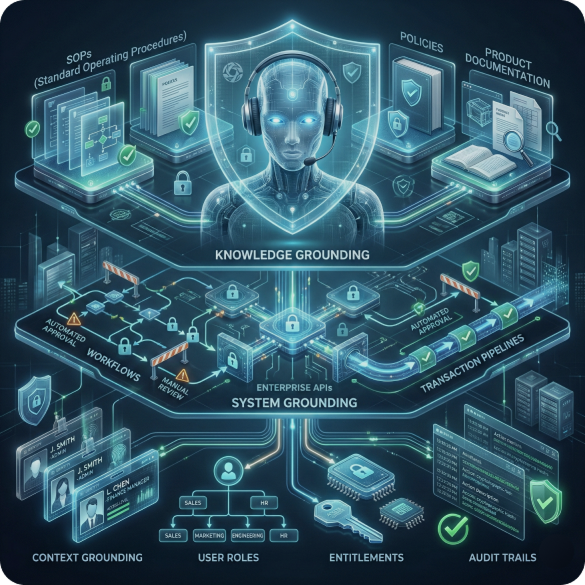

Grounding agents in enterprise data, knowledge, and systems

Guided AI Agents are grounded in enterprise reality through:

Knowledge grounding: SOPs, policies, product documentation

System grounding: approved APIs, workflows, and transaction constraints

Context grounding: user role, business unit, entitlement, audit requirements

When the agent answers or acts, it does so within the boundaries of verified enterprise context.

Why accuracy comes from structure, not continuous learning

Traditional agents attempt to “think” their way to an answer every time. Guided AI Agents execute consistent logic supported by enterprise knowledge. This improves accuracy because the agent is not improvising. It is executing.

Step-by-step flow (in prose): how guided execution works

A Guided AI Agent typically operates through a repeatable sequence:

Classify the request into a known workflow category

Validate eligibility (role, permissions, prerequisites)

Fetch required context from enterprise systems and knowledge sources

Confirm required inputs if information is missing

Execute defined steps in the workflow path

Log actions and rationale for audit traceability

Escalate exceptions to human teams when boundaries are reached

Close the loop with a structured outcome and confirmation

This design supports both conversational engagement and enterprise-grade predictability.
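The sequence above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a reference implementation: the `WORKFLOWS` catalogue, the keyword-based `classify` function (a stand-in for whatever classifier a real deployment would use), and the `Request` and `Outcome` types are all hypothetical, and the system calls are stubbed out.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    user_role: str
    text: str
    inputs: dict = field(default_factory=dict)


@dataclass
class Outcome:
    status: str  # "completed", "needs_input", or "escalated"
    audit_log: list = field(default_factory=list)


# Hypothetical workflow catalogue: keyword, eligible roles, required inputs.
WORKFLOWS = {
    "password_reset": {
        "keyword": "password",
        "roles": {"employee", "contractor"},
        "required_inputs": ["username"],
        "steps": ["verify_user", "reset_credentials", "notify_user"],
    },
}


def classify(text):
    """Step 1: map the request onto a known workflow, or None."""
    lowered = text.lower()
    for name, spec in WORKFLOWS.items():
        if spec["keyword"] in lowered:
            return name
    return None


def handle(request):
    outcome = Outcome(status="escalated")
    log = outcome.audit_log.append  # step 6: every decision is recorded

    workflow = classify(request.text)
    log(f"classified as {workflow}")
    if workflow is None:
        log("escalated: no matching workflow")  # step 7
        return outcome

    spec = WORKFLOWS[workflow]
    if request.user_role not in spec["roles"]:  # step 2
        log(f"escalated: role {request.user_role} not eligible")
        return outcome

    # Step 3 (fetching context from enterprise systems) is stubbed out here.
    missing = [k for k in spec["required_inputs"] if k not in request.inputs]
    if missing:  # step 4
        outcome.status = "needs_input"
        log(f"missing inputs: {missing}")
        return outcome

    for step in spec["steps"]:  # step 5
        log(f"executed {step}")

    outcome.status = "completed"  # step 8
    log("closed with confirmation")
    return outcome


result = handle(Request("employee", "I forgot my password", {"username": "jdoe"}))
print(result.status)
print(result.audit_log)
```

Every branch ends in one of three explicit states, and each one leaves a log entry behind. A request that falls outside the catalogue or the user's role is escalated rather than improvised.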

Guided Execution vs Continuous Learning

To understand why guided approaches scale better, it helps to compare the operational realities of both models.

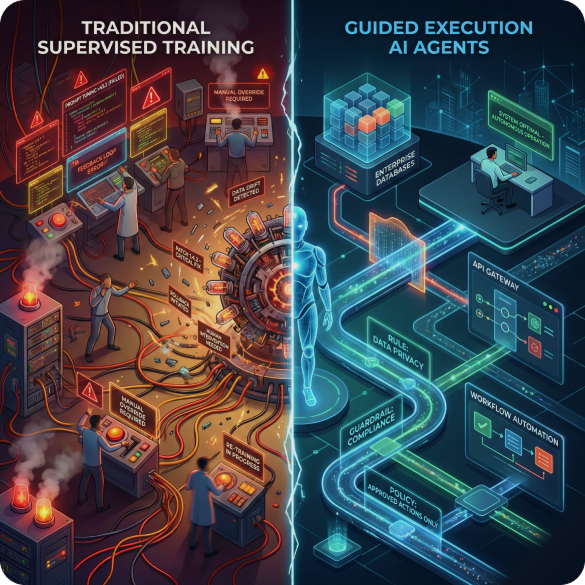

Building AI agents with supervised training vs guiding execution

Traditional approaches often rely on:

Continuous supervised learning cycles

Prompt tuning and patching

Manual oversight for variance control

Guided AI agents focus on:

One-time guided alignment

Stable execution rules

Repeatable workflows anchored to enterprise systems

Using brittle code vs training with enterprise data and RLHF

Many AI implementations become brittle because teams combine:

Hard-coded orchestration

Complex prompt stacks

Unbounded LLM behavior

Guided AI Agents balance flexibility with control by using enterprise data and advanced alignment techniques such as RLHF, which improves adherence to desired behavior patterns while maintaining natural language interaction.

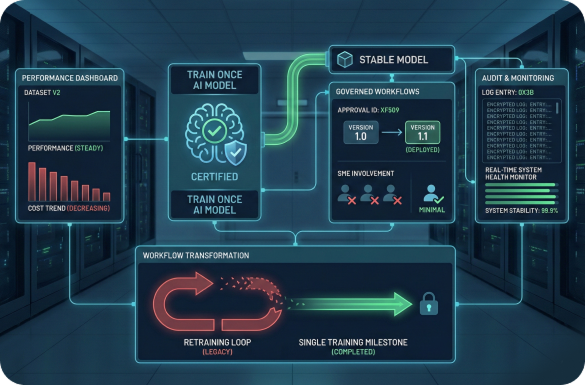

Static retraining cycles vs stable, repeatable workflows

In continuous-learning models, change triggers retraining.

In guided models, change triggers controlled updates to workflows or knowledge sources—similar to how enterprises manage process updates today.
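A controlled update can be as simple as publishing a new, approved version of a rule set and keeping the old one for comparison. The Python sketch below illustrates the idea. `RuleSet`, `publish_update`, `diff`, and the rule names are hypothetical and stand in for whatever change-management tooling an enterprise already uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RuleSet:
    version: int
    rules: dict       # rule name -> value
    approved_by: str


def publish_update(current, changes, approved_by):
    """Create a new version object instead of editing the live one."""
    merged = {**current.rules, **changes}
    return RuleSet(version=current.version + 1, rules=merged, approved_by=approved_by)


def diff(old, new):
    """List what changed between two versions as (rule, before, after)."""
    keys = sorted(set(old.rules) | set(new.rules))
    return [
        (k, old.rules.get(k), new.rules.get(k))
        for k in keys
        if old.rules.get(k) != new.rules.get(k)
    ]


v1 = RuleSet(version=1, rules={"approval_threshold": 500, "max_retries": 3}, approved_by="ops-lead")
v2 = publish_update(v1, {"approval_threshold": 250}, approved_by="compliance")
print(diff(v1, v2))
```

With versions kept side by side, the questions "what changed?" and "who approved it?" have a direct answer, which is hard to obtain from a model that has been retrained.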

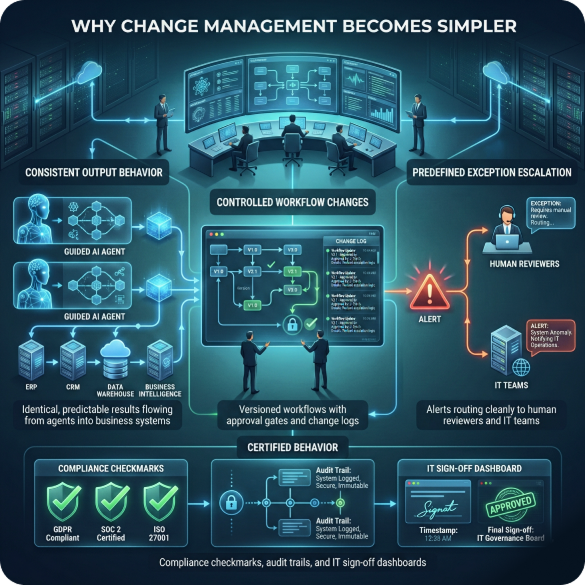

Why change management becomes simpler, not harder

Guided AI Agents reduce operational complexity because:

Output behavior is consistent

Workflow changes are controlled

Exceptions follow predefined escalation routes

IT can certify behavior like any other enterprise system

This makes guided AI adoption more compatible with established enterprise governance models.

Why Guided AI Agents Reduce Cost and Complexity

Enterprise AI success is ultimately measured by delivery speed, ongoing operational cost, and scalability.

Guided AI Agents reduce cost by eliminating the need for perpetual retraining and supervision.

Eliminating repeated supervised training cycles

The “train once” approach reduces:

Ongoing SME time for revalidation

Repeated evaluation and retraining efforts

Constant corrections and patchwork prompt updates

This translates into lower operational overhead and fewer hidden costs.

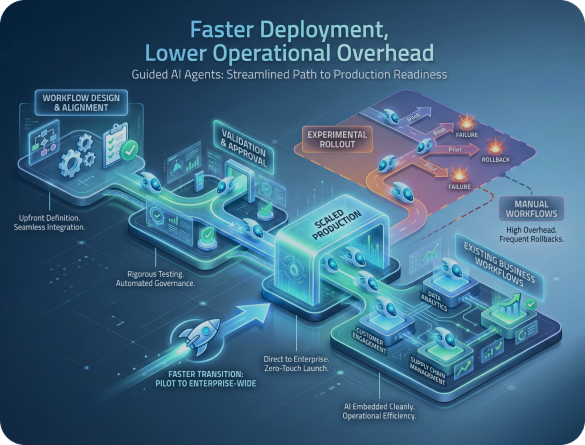

Faster deployment and lower operational overhead

Guided AI Agents accelerate deployment because workflow definition and alignment are done upfront, rather than discovered through production failures.

Organizations can move faster because they are implementing known processes—not experimenting in production.

McKinsey and Deloitte have both highlighted that value realization depends on how quickly AI moves from pilot to scaled production deployment and how effectively it is embedded into business workflows (McKinsey, Deloitte).

Scaling agents without scaling human supervision

Traditional AI scaling often looks like this:

More agents → more exceptions → more supervision → more cost

Guided AI scaling behaves differently:

More agents → reuse guided workflows → consistent behavior → controlled oversight

This enables scaling without linear growth in operational staffing.

Business Impact of Guided AI Agents

Guided AI Agents are not about novelty. They are about outcomes.

Weeks-to-value instead of months

With guided alignment and reusable workflow structures, enterprises can reduce the time required to deploy AI agents across multiple departments.

Instead of building each agent from scratch, guided patterns can be replicated and extended.

Consistent accuracy across workflows and systems

Guided AI Agents can deliver consistent outcomes across:

IT service management

HR support

Customer service workflows

Policy-guided operations

Compliance-controlled task execution

This consistency is often the deciding factor for scaling beyond pilots.

Improved confidence for IT, compliance, and business teams

When agents behave predictably, stakeholders gain confidence:

IT gains deployability and operational control

Compliance gains traceability and audit confidence

Business gains reliability and faster service delivery

This trust unlocks broader adoption.

The Future of Enterprise AI: Guided, Governed, and Executable

The next phase of enterprise AI will not be defined by who can generate the most content. It will be defined by who can execute work reliably.

From AI that answers questions to AI that executes work

Enterprises are moving beyond “AI as a search layer” toward “AI as an operational layer.”

That means AI must:

Trigger workflows

Validate conditions

Perform system actions

Record decisions

Enforce policy constraints

Execution, not generation, becomes the differentiator.

Agentic AI as a configured enterprise capability

Agentic AI should be treated as a capability that is configured once and reused—similar to how enterprises configure ERP modules, ITSM workflows, or security policies. This is the logic behind Guided AI Agents.

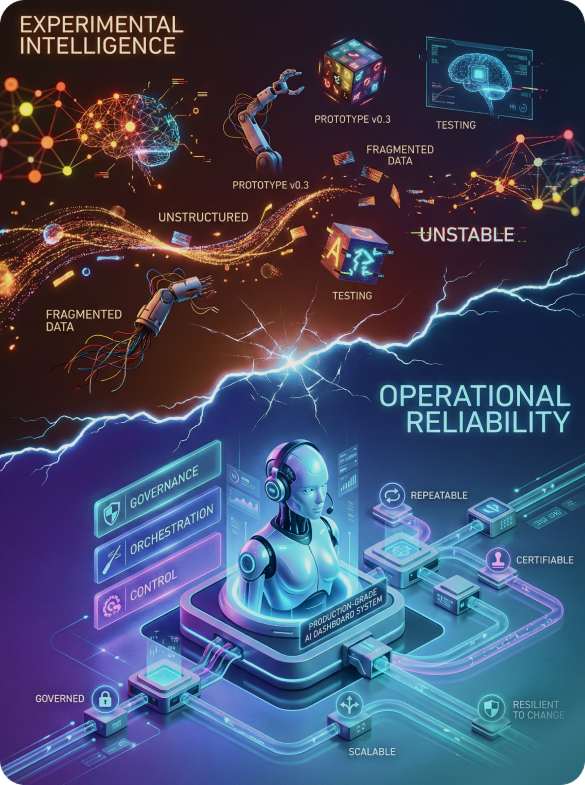

Moving from experimental intelligence to operational reliability

The organizations that succeed will not be the ones with the most prototypes. They will be the ones that build AI systems that operate like enterprise systems:

governed

certifiable

repeatable

scalable

resilient to change

That is the future direction: guided, governed, and executable AI.

Conclusion

From Endless Training to Predictable Outcomes

Enterprise AI agents often struggle to scale because they require ongoing training, supervision, and fixes. The probabilistic nature of LLMs—combined with purely programmatic agent design—creates variability that’s difficult to govern in production.

Guided AI Agents solve this by shifting from continuous retraining to one-time alignment, and from open-ended behavior to structured execution within enterprise guardrails—delivering predictable, auditable outcomes.

Why “trained once, accurate always” matters

Enterprises don’t want never-ending AI initiatives. They need capabilities that can be deployed, trusted, and scaled sustainably.

A practical path for IT and compliance

With Guided AI Agents:

AI stays conversational

governance stays enforceable

workflows stay controlled

outcomes stay consistent

The fastest route to enterprise AI value is not constantly changing agents—it’s guided execution that performs reliably at scale.

FAQs

How much supervision is required initially?

Initial supervision is required during guided alignment to validate workflows, define boundaries, and confirm correct behavior. After deployment, supervision is significantly lower than with traditional AI agents because outcomes are stable and repeatable within defined guardrails.

What happens when systems or workflows change?

Guided AI Agents are designed to handle change through controlled updates—such as workflow adjustments, rule updates, or knowledge refresh—rather than unpredictable continuous learning. This makes change management simpler and easier to certify.

How does governance and auditability work?

Guided AI Agents support governance through structured workflows, logged actions, and enforceable boundaries. This enables traceability, repeatability, and clearer compliance validation compared to adaptive agents that drift over time.

Build Guided AI Agents—Faster and Safer

If your enterprise is exploring AI agents but struggling with timelines, supervision overhead, or governance complexity, start small and execute with control.

- Start with a single workflow or system.

- Experience one-time alignment and repeatable execution.

- See how Guided AI Agents shorten your AI roadmap—without sacrificing reliability.